Why Are Data Contracts Becoming Mandatory For Engineering Teams?

Your data pipeline has no rules. That’s the problem.

Three dashboards. One metric. Three different answers.

This is rarely a reporting problem. It is almost always a contract violation that went undetected.

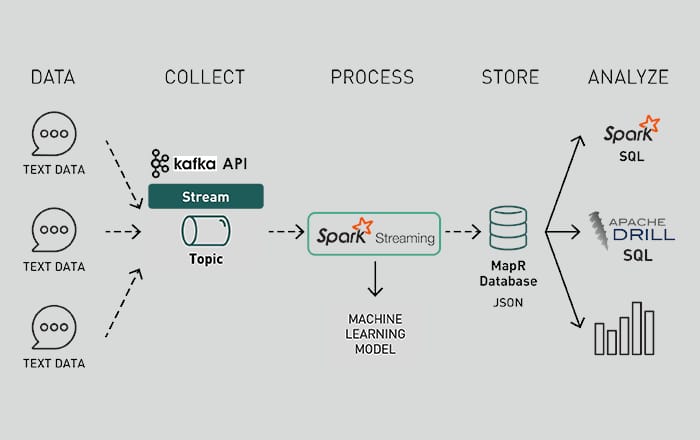

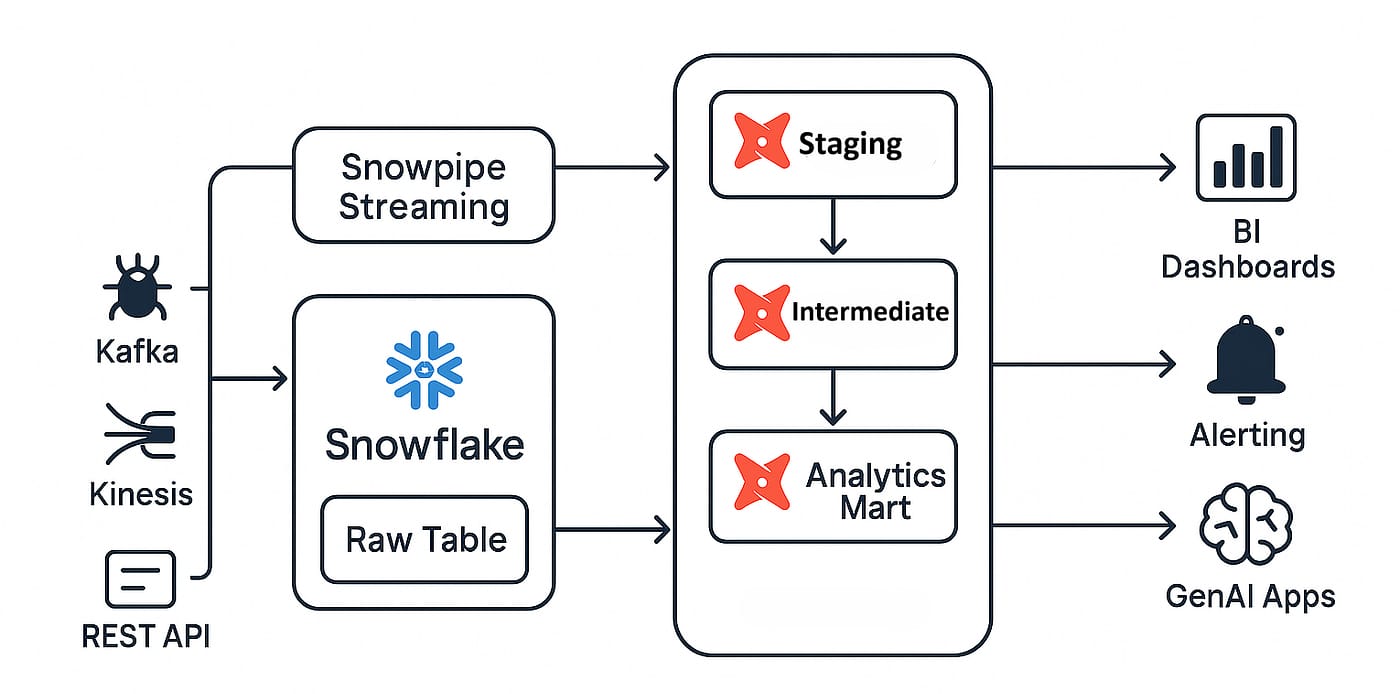

In most modern stacks, data flows through a chain that looks roughly like this: event generation → ingestion (Kafka / Kinesis) → storage (data lake or warehouse) → transformation (dbt / Spark) → consumption (BI tools, models, APIs).

At every step, there are implicit assumptions about schema shape, field semantics, and update behavior.

The problem is that these assumptions are not enforced at the boundaries where they matter.

So when a metric diverges across dashboards, what you are seeing is not inconsistency in reporting. You are seeing different parts of the system operating on different versions of the same contract.

Where systems actually break

Most failures are introduced upstream but only surface downstream, and by then the signal is too weak to trace.

Consider a simple upstream change:

A producer changes a field from int to string to accommodate a new format

Or introduces a new enum value without backfilling historical logic

Or switches from event-time to processing-time timestamps

If you are using something like Apache Kafka with a schema registry (Avro / Protobuf), compatibility rules may technically pass. Backward compatibility often allows adding optional fields or widening types.

However, “compatible” at the schema level does not mean “correct” at the semantic level.

Downstream systems react differently:

A Apache Spark job may silently cast types or drop malformed rows depending on configuration

A dbt model may continue to run but produce different aggregations because a CASE condition no longer matches

A BI layer may cache results or recompute metrics using slightly altered logic

None of these fail loudly. They degrade.

This is how you end up with partially updated partitions, skewed aggregations, silent null inflation, and duplicated records due to idempotency breaks

And because the pipeline is still “green,” no one investigates until numbers are questioned.

The limitation of schema-first thinking

Most discussions around data contracts focus on schema enforcement: field presence, types, and structural validation.

That is necessary, but insufficient.

Real-world data issues are rarely schema violations. They are violations of semantic contracts (what a field actually represents), temporal contracts (when data is expected to arrive and stabilize), and distributional expectations (how data behaves statistically over time).

For example:

A revenue field can remain a float and still be wrong because discounts are now applied upstream

A timestamp field can be present but shifted due to timezone normalization changes

A join key can exist but lose uniqueness, breaking downstream aggregations

None of this is caught by basic schema validation.

Why most data contracts fail in production

In practice, data contracts are rarely implemented as enforcement mechanisms. They exist as static definitions in the form of JSON schemas, dbt tests, or documentation layers in data catalogs. While these artifacts capture intent, they do not actively govern how data evolves once systems are in motion.

Enforcement, where it exists, is typically batch-oriented and delayed. By the time a dbt test fails, the data has already been ingested, transformed, and in many cases consumed by downstream dashboards or models.

At that stage, the system is no longer preventing errors but tracing them after they have propagated. Debugging becomes an exercise in reconstruction rather than control.

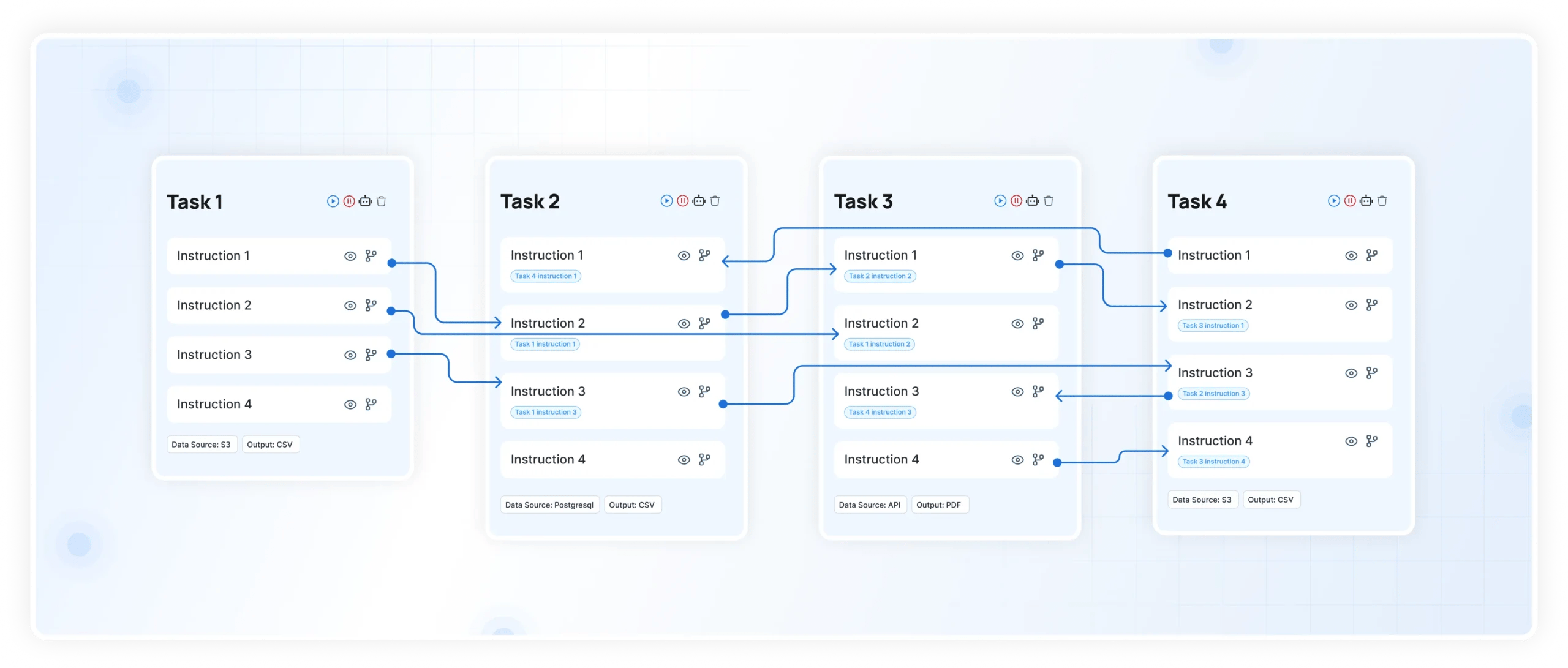

A more fundamental issue is the absence of impact awareness. Most data systems do not provide producers with visibility into how their changes affect downstream dependencies. A seemingly minor modification, such as altering a field or adjusting its semantics, can cascade across tables, dashboards, and decision layers without any immediate signal.

Since these dependencies are not surfaced at the point of change, producers optimize for local correctness while unintentionally introducing global inconsistencies.

This is why many changes appear rational in isolation but become disruptive at the system level. Without continuous enforcement and dependency visibility, data contracts remain descriptive rather than operational, and the system continues to drift despite having them in place.

What enforcement actually requires

To make data contracts meaningful, enforcement has to happen at three levels:

1. Interface-level validation (at ingestion boundaries)

Every producer change should be validated against schema compatibility, required fields, and basic constraints.

This is where schema registries help, but they need to be extended with stricter rules than default backward compatibility.

2. Pipeline-level guarantees (during transformation)

Transformations need explicit assertions around null thresholds, uniqueness constraints, and referential integrity.

For example, a fact table join should fail if key cardinality changes beyond a threshold, instead of producing inflated aggregates.

3. Behavioral monitoring (post-deployment)

Even if schema and transformations pass, systems need to track distribution shifts, anomaly detection on metrics, and drift in join outputs. This is where most stacks are weakest.

Where AI Tools Fit In This Workflow

The gap is not visibility. It is continuous enforcement with context.

DataManagement.AI is very useful in this scenario especially when it operates across layers:

It tracks lineage end-to-end, so a field-level change can be mapped to downstream tables, dashboards, and models

It enforces constraints in near real time, not just in batch validation cycles

It monitors statistical properties of data, not just structure

For example:

If a primary key suddenly loses uniqueness, it can flag the exact upstream change responsible.

If a distribution shifts, it can correlate it with a deployment or schema modification.

If a field is dropped or renamed, it can identify every dependent asset before the change propagates.

This shifts the system from reactive debugging to pre-deployment validation and controlled change management.

The cost of getting this wrong

At scale, unreliable data is not just a quality issue. It is a systems problem.

Every silent failure introduces reprocessing overhead, manual reconciliation loops, and delayed decision cycles.

Over time, teams compensate by building parallel logic, duplicating pipelines, and hardcoding fixes.

This is how data platforms become complex, fragile, and expensive to maintain.

Warm regards,

Shen Pandi & Team