The Real Reason Your Analysts Keep Rebuilding the Same Metrics

Most analysts do not lose time on query authoring. They lose it during dataset qualification. Before writing SQL, they need to determine which table is production-grade, which model reflects the latest business logic, whether the schema has drifted since the last reporting cycle, and whether downstream consumers still treat that dataset as authoritative.

In mature warehouses, the constraint is rarely data availability. It is namespace saturation. The same logical entity is often materialized across raw ingestion layers, normalized staging models, domain marts, one-off backfills, archived snapshots, and manually patched “final” tables that preserve overlapping schemas but encode different transformation logic.

At that point, SQL is not the bottleneck. The bottleneck is validating which dataset has stable semantics, trusted lineage, and current business relevance before it is safe to query.

Most dataset discovery is still happening through tribal memory

In most organizations, dataset discovery still operates as an unstructured dependency on institutional memory rather than system-level metadata. Analysts rely on Slack threads, tribal handoffs, and prior query history to determine whether “revenue_final” superseded “revenue_v2,” whether “customer_latest” is still actively maintained, or which schema finance has implicitly standardized for reporting.

This creates a metadata resolution bottleneck that is often mistaken for collaboration. The warehouse is technically queryable, but dataset selection still depends on undocumented ownership, incomplete lineage, and assumptions about trust that are never codified into the retrieval layer.

That is why metric duplication becomes systemic. When dataset reliability cannot be inferred programmatically through lineage, freshness, usage context, or quality signals, analysts default to rebuilding logic locally because reconstructing a model is often operationally cheaper than validating whether an existing one is safe to reuse.

The cost compounds long before anyone notices it

Once dataset discoverability degrades, local model reimplementation becomes the default execution path. Analysts stop reusing existing assets and begin rebuilding them because validating whether a dataset is safe to trust becomes more expensive than recomputing it.

What this usually looks like in practice:

Join graphs are rebuilt even when equivalent logic already exists upstream

Derived tables are re-materialized with slightly modified predicates or filters

Parallel semantic models emerge because trust, freshness, and lineage cannot be verified programmatically

This is how warehouse sprawl becomes systemic. Each local workaround introduces another partially redundant transformation layer, another non-canonical semantic definition, and another dependency chain that now has to be maintained independently. Over time, the warehouse remains syntactically queryable, but its semantic surface area becomes increasingly non-deterministic.

At that point, the cost is no longer analyst throughput. It becomes the compounding operational overhead of duplicated transformation logic, fragmented semantic models, and the ongoing reconciliation burden required to maintain consistency across analytically equivalent but structurally divergent datasets.

This is not a documentation problem. It is a retrieval problem

Most teams try to solve this with documentation, catalogs, or naming conventions. Those help, but they still assume the analyst knows what to search for.

The actual problem is retrieval under ambiguity. Analysts are not looking for table names. They are trying to retrieve the correct business definition, with the right lineage, trust level, and downstream usage context.

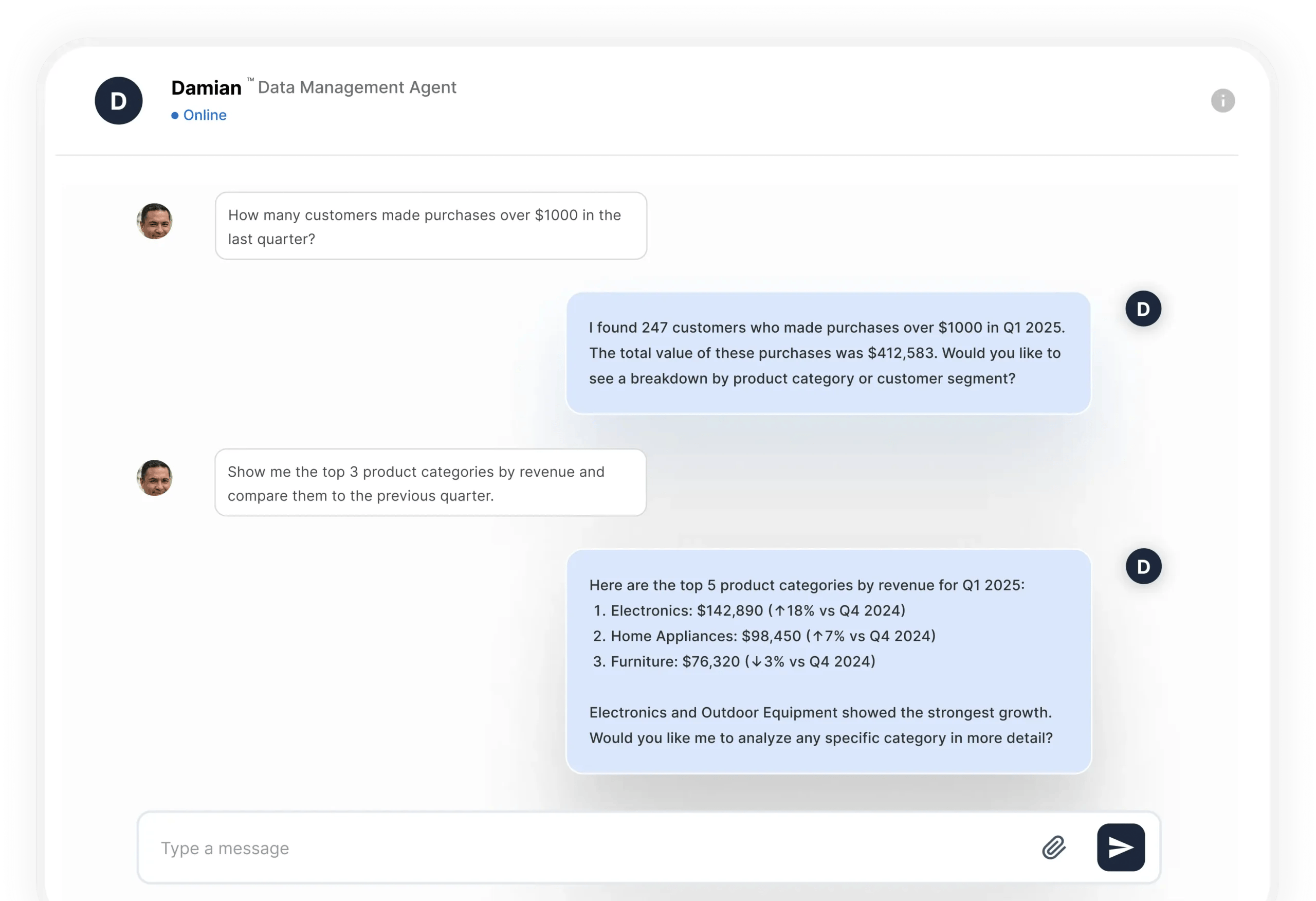

This is where DataManagement.AI’s Damian chatbot becomes operationally useful. Instead of searching schemas manually or asking for context in Slack, analysts can query Damian directly, retrieve the right dataset in context, understand how it is used, and access the relevant business logic without manually tracing warehouse structure first.

Your analysts are not slow - here’s the proof

When analysts spend more time locating trusted data than analyzing it, the problem is no longer productivity. It is warehouse usability. At that point, the warehouse has stopped functioning like an analytical system and started behaving like an unindexed file system where access exists, but retrieval depends on memory, tribal context, and repeated manual validation.

The technical cost is not just slower queries. It is delayed decision cycles, duplicated model logic, and analysts repeatedly reconstructing trust signals that should already exist in the platform.

Finance waits longer for reconciled numbers, product teams rebuild metrics that already exist, and leadership decisions are delayed because no one can verify which dataset is both current and authoritative.

The practical fix is not more documentation. It is making dataset retrieval deterministic. That means exposing trust signals directly at query time, including lineage depth, freshness windows, downstream usage, ownership, and quality status, so analysts can evaluate a dataset before they ever write SQL.

When retrieval becomes metadata-driven instead of memory-driven, analysts stop spending hours proving a dataset is safe to use and start spending that time generating decisions the business can actually act on.

Warms regards,

Shen Pandi & DataManagement.AI team