The One Visibility Problem Killing Your Data Strategy

The uncomfortable truth.

Today's Leak

70% of data leaders juggle 5‑7 tools, creating chaos instead of strategy.

Integration maintenance adds 15‑18% on top of licensing costs, and 40% of practitioners lose more than 30% of their time switching between tools.

Fragmented stacks erode trust and undermine AI: 85% of AI projects fail, most often because of poor data strategy.

Unified visibility replaces tool‑sprawl with a single source of truth.

Stop managing tools; start delivering fast, trusted answers.

You Have 7 Data Tools. So, Why Does Your Business Still Feel Blind?

The 70% Crisis You Didn't See Coming

70% of data leaders are managing between five and seven different data tools right now.

That is not a strategy. That is a second job.

You wake up managing customer data in one platform, governance in another, and quality in a third. By lunch, you are troubleshooting why dashboard four doesn't match report five.

You are not managing data anymore. You are managing chaos.

And the worst part? Despite all those tools, you still can't answer one simple question: What is our actual revenue forecast for next quarter?

If this feels familiar, you are not alone. But you are running out of time to fix it.

Stop managing tools. Start managing outcomes. DataManagement.AI gives you the visibility your stack never could.

The Meeting Nobody Wants to Talk About

Here's where it gets messy

It is Monday morning. Your CFO sends a Slack message asking for a unified view of customer acquisition costs across three different product lines.

You have a top-tier tool for master data management. You have a separate, best-in-class solution for data quality. You have a third platform for visualization.

You pull the data from tool A. You clean it in tool B. You push it to tool C.

The numbers are wrong.

So, you call a meeting with your engineering lead. They explain that the API between the quality tool and the master data tool broke over the weekend. Again.

Your team spends the next eight hours fixing the integration.

The CFO gets their report at 5:00 PM.

They look at it, nod, and say, “Great. Can we run this analysis weekly now?”

You want to scream.

This is not a technology failure. It is a visibility failure.

You have all the instruments, but you are flying blind because the cockpit is a mess of wires that don’t connect.

Why Adding More Tools Is Making Everything Worse

You Are Paying for 100 Features and Using 5

The modern data ecosystem was built on specialization. Each vendor solved a narrow problem brilliantly. Data quality here. ETL there. Dashboarding in a third place. Governance in a fourth.

The result? Your stack is a patchwork of overlapping capabilities, each one requiring its own licensing, onboarding, maintenance cycle, and team of specialists who actually understand how it works.

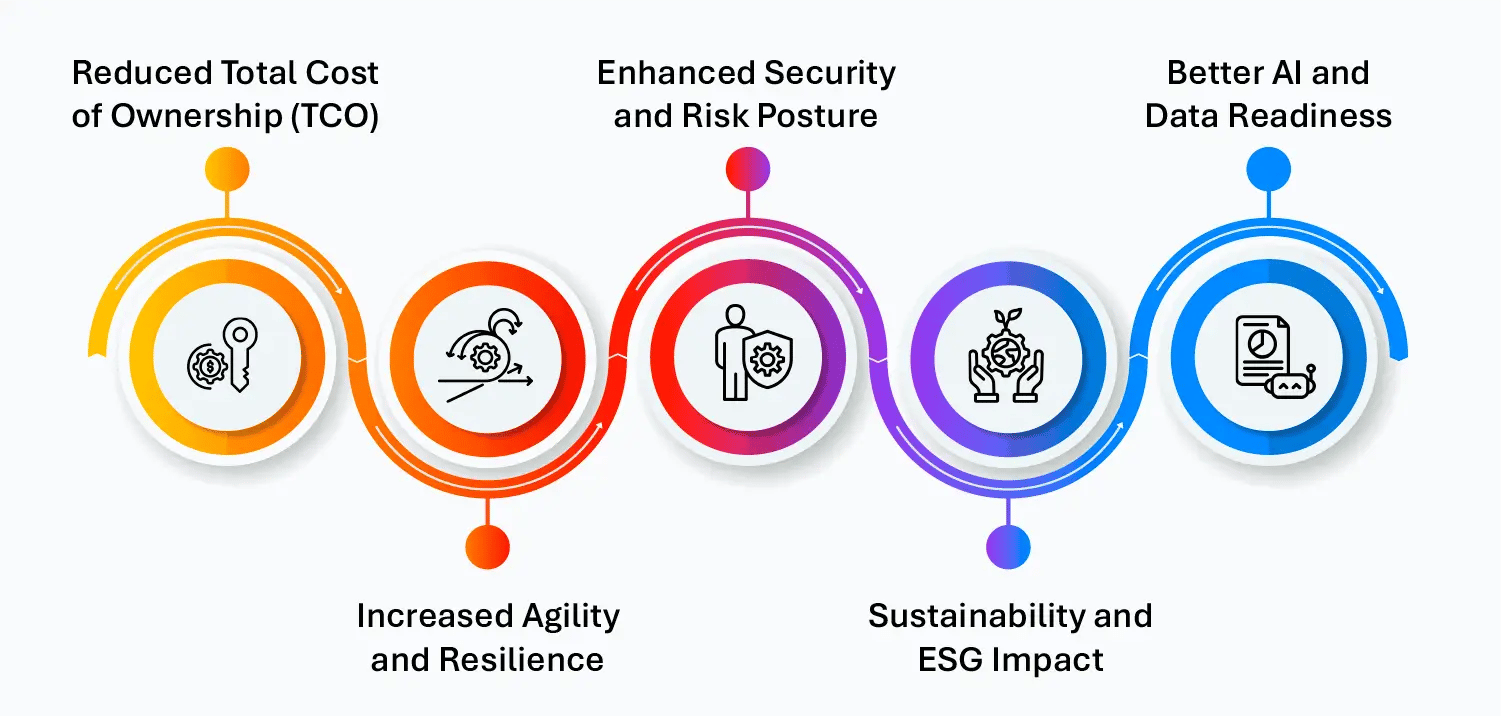

According to industry research, maintenance costs alone add 15 to 18% on top of every licensing contract you sign. If you are spending $2 million on data tooling annually, you are spending an extra $300,000 to $360,000 just keeping those tools alive. That is not ROI. That is overhead dressed as investment.
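The arithmetic behind that range can be checked in a few lines. This is a minimal sketch: the function name and its parameters are illustrative, while the 15 to 18% range and the $2 million budget come from the paragraph above.

```python
def maintenance_overhead(annual_licensing, low_pct=15, high_pct=18):
    """Return the (low, high) yearly maintenance overhead in dollars.

    low_pct and high_pct are whole-number percentages applied on top
    of the annual licensing spend.
    """
    low = annual_licensing * low_pct / 100
    high = annual_licensing * high_pct / 100
    return low, high


# Example from the text: $2 million in annual data tooling spend.
low, high = maintenance_overhead(2_000_000)
print(f"${low:,.0f} to ${high:,.0f}")  # prints $300,000 to $360,000
```

Swap in your own licensing total to see what the same range implies for your stack.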

Your Best Engineers Are Babysitting Pipelines, Not Building Solutions

Here is what your data team's actual day looks like. They are not building products that drive revenue. They are troubleshooting integration failures between tools that were never designed to speak to each other.

Research shows that 40% of data practitioners spend more than 30% of their time jumping between tools just to keep them aligned. For those practitioners, that is close to a third of your most expensive resource's working hours consumed by a problem your tooling is supposed to solve.

When your data engineers become an operations function instead of a value creation function, the entire purpose of your data investment breaks down.

Three Answers to One Question Is Not Insight. It Is a Crisis.

When data lives across siloed systems without a shared governance layer, duplication is inevitable. Business users stop waiting for the integration to get fixed. They copy the data themselves. They build their own spreadsheets. They stop trusting the official sources.

Soon, you have three versions of the truth and no authoritative one. Stakeholders stop relying on data entirely. Decisions revert to gut instinct. And every executive question triggers a week-long data archaeology project instead of a 30-second dashboard lookup.

The Hidden Cost You Are Not Measuring

Tool Overload Does Not Just Cost Money. It Costs Strategy.

Most organizations measure tool costs through licensing fees. That is the visible line item. But the real cost is buried underneath it.

Think through what you are not seeing on your P&L. The cost of your platform team keeping integrations from breaking. The cost of project handoffs where new engineers need months to understand a stack that only three people fully know.

The cost of migrating data assets when a vendor relationship ends. The cost of governance gaps created every time a new tool introduces a new point of exposure.

A 2024 industry survey found that 40% of data leaders identify integration maintenance as their single highest ongoing cost driver. Not the tools. The glue between them.

Your AI Strategy Is at Risk

Here is a stat worth stopping on. Forbes reports that 85% of AI projects fail. The leading reason is not bad algorithms or wrong use cases. It is poor data strategy.

You cannot build a reliable AI capability on top of a fragmented, siloed, ungoverned data stack. The foundation is wrong. And adding an AI layer on top of that foundation does not fix it. It multiplies the problem.

If your data is inconsistent, your AI outputs will be too. And in a market where AI is rapidly becoming a competitive requirement, that gap is not just a data problem. It is a business survival problem.

What the Companies Getting This Right Are Doing Differently

They Stopped Asking "What Tool Do We Need?" and Started Asking "What Outcome Do We Need?"

The most effective data organizations in 2025 are reversing the process. Instead of building a stack and hoping it produces useful output, they start with the business outcome and work backward to define the technical execution.

What decision needs to be made? What data needs to support it? What is the simplest infrastructure required to get there reliably?

This approach sounds obvious. But it is the opposite of how most data stacks were built, which was tool by tool, vendor by vendor, over years of reactive purchasing decisions.

They Are Replacing Tool Sprawl with Unified Visibility

The organizations moving fastest are not adopting more point solutions. They are consolidating around platforms that offer end-to-end visibility across data quality, lineage, governance, and usage in a single operating environment.

This is not about ripping out your existing infrastructure. It is about creating a control plane that sits above your current tools and unifies how your team sees, governs, and acts on data. One interface. One source of truth. One version of the answer your CEO is looking for.

When your stack is unified around a single platform layer, something changes immediately. Your engineers stop firefighting. Your business users stop duplicating. Your leadership team stops arguing about which dashboard to believe.

They Are Treating Data as a Product, Not a Pipeline

The most strategically advanced organizations have made a fundamental shift. They no longer think about data as an output of engineering work. They treat every data asset as a product that has an owner, a lifecycle, a defined consumer, and a measurable ROI.

This shift changes everything. When data has an owner, governance improves. When data has a lifecycle, maintenance becomes proactive rather than reactive.

When data has a consumer, the stack gets built around actual need instead of theoretical capability.

This is not a technology change. It is a cultural and operational change. But it is the change that separates organizations whose data creates competitive advantage from those whose data creates compliance risk.
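Although the shift is cultural, the four attributes named above (owner, lifecycle, consumer, ROI) can still be written down explicitly for each data asset. The sketch below is one hypothetical way to record them. The class, field names, and lifecycle stages are assumptions for illustration, not part of any specific platform.

```python
from dataclasses import dataclass, field
from enum import Enum


class Lifecycle(Enum):
    """Hypothetical lifecycle stages for a data product."""
    DRAFT = "draft"
    ACTIVE = "active"
    DEPRECATED = "deprecated"


@dataclass
class DataProduct:
    name: str
    owner: str                      # person or team accountable for the asset
    lifecycle: Lifecycle            # where the asset is in its life
    consumers: list[str] = field(default_factory=list)  # who depends on it
    roi_metric: str = ""            # how its value is measured

    def is_fully_defined(self) -> bool:
        """True when every product attribute from the text is filled in."""
        return bool(self.owner and self.consumers and self.roi_metric)


forecast = DataProduct(
    name="quarterly_revenue_forecast",
    owner="finance-data-team",
    lifecycle=Lifecycle.ACTIVE,
    consumers=["CFO", "sales-ops"],
    roi_metric="hours saved per reporting cycle",
)
print(forecast.is_fully_defined())  # prints True
```

An asset that fails this check has no clear owner, consumer, or measure of value, which is usually where governance gaps start.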

The 5 Signs Your Data Stack Needs a Strategic Overhaul

You do not need to wait for a boardroom crisis to know your current setup is not working. Watch for these signals in your organization:

Your data team spends more time maintaining integrations than building new data products.

Business users have stopped trusting dashboards and have started maintaining their own spreadsheets.

You cannot answer a straightforward revenue question without reconciling three different systems.

Your AI initiatives are stalling because the underlying data is inconsistent.

New team members take 3 to 6 months before they can navigate the stack independently.

If two or more of these are familiar, your data stack is not a tool problem. It is a visibility and governance problem.
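The five signals and the "two or more" rule can be turned into a quick self-check. This is a minimal sketch: the function name and the short signal labels are illustrative, and the threshold of two comes from the text above.

```python
# Short labels for the five signals listed above (wording is illustrative).
SIGNALS = [
    "More time maintaining integrations than building data products",
    "Business users keep their own spreadsheets instead of trusting dashboards",
    "Revenue questions require reconciling three systems",
    "AI initiatives stall on inconsistent data",
    "New hires need 3 to 6 months to navigate the stack",
]


def needs_overhaul(observed, threshold=2):
    """Return True when at least `threshold` signals are present.

    observed: one boolean per entry in SIGNALS, in the same order.
    """
    if len(observed) != len(SIGNALS):
        raise ValueError("expected one answer per signal")
    return sum(bool(answer) for answer in observed) >= threshold


# Example: signals 1 and 3 apply, the rest do not.
answers = [True, False, True, False, False]
print(needs_overhaul(answers))  # prints True
```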

What Real Visibility Actually Looks Like

Real visibility is not just dashboards. It is not more metrics. It is not another integration sitting between two systems that were never designed to talk.

Real visibility means any authorized person in your organization can find the data they need, trust its accuracy, understand where it came from, and act on it in minutes rather than days.

It means your data engineers are spending their time building products that create business value, not keeping integrations alive.

It means when your CEO asks a question, your marketing, sales, and finance leads give the same answer from the same source at the same time.

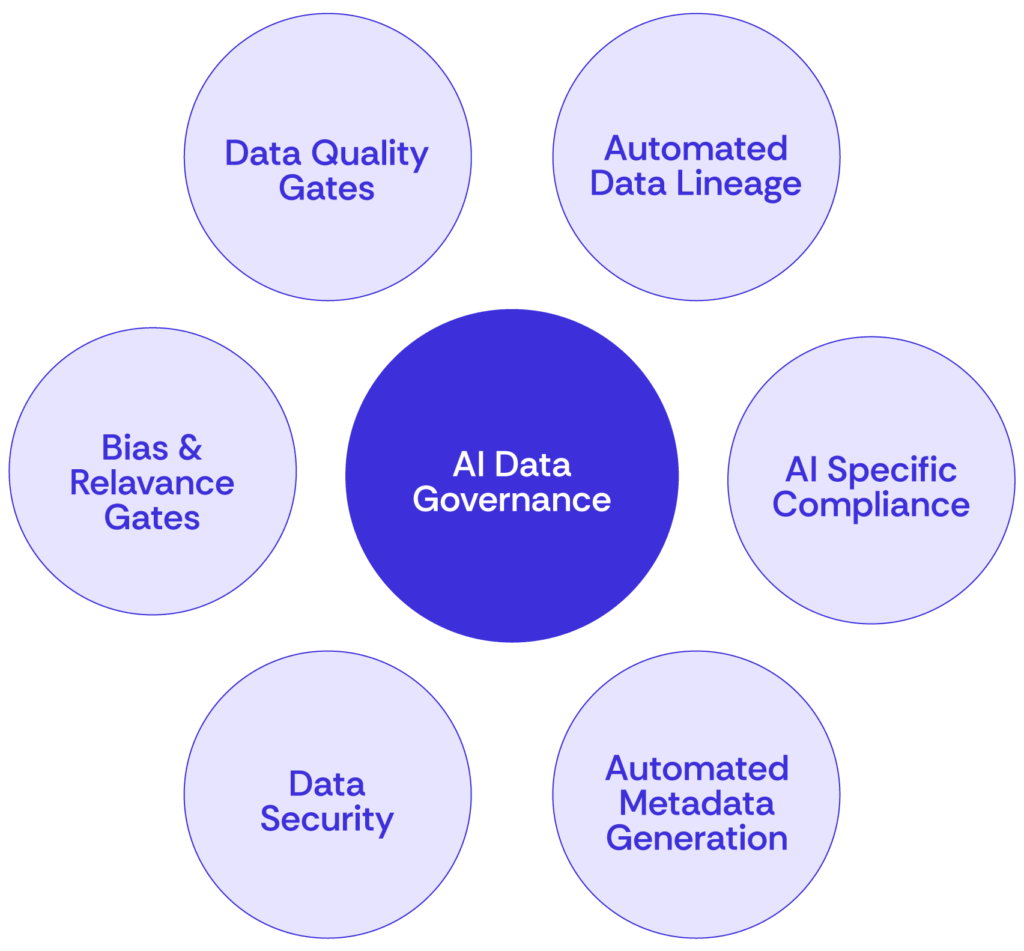

It means your AI initiatives are built on a foundation of clean, governed, lineage-tracked data that you can actually trust.

Most Organizations Don't Know This Is Even Possible

This is where a platform like DataManagement.AI changes the picture entirely.

Instead of adding another tool to your stack, you get a centralized layer that brings real visibility, governance, and lineage tracking across your existing infrastructure. Your team stops juggling disconnected systems and starts operating from a unified environment where data quality, usage, and compliance are all visible in one place.

You get automated lineage tracking, so you always know where your data came from and where it went. You get a shared governance layer, so there is no longer a different rule set for every tool in your stack. You get role-specific visibility, so your CEO sees trends, your data leads see quality signals, and your engineers see pipeline health.

The result is not just operational efficiency. It is organizational confidence. Your leadership team makes decisions faster. Your data team spends time on work that moves the business. Your AI investments start delivering because the foundation finally supports them.

Stop Adding Tools. Start Getting Answers.

Every week you operate on a fragmented data stack is a week your competitors who have unified theirs are making faster decisions, shipping better AI features, and building more trust with their customers.

You already have the data. What you need is the visibility to use it.

See exactly how DataManagement.AI connects your data sources, enforces governance policies, and gives your AI features the reliable foundation they need to perform at their best.

Warm regards,

Shen Pandi & Team