Real-world AI failures that prove the model isn’t the problem

The hidden cost of bad data

Inside most companies building AI, the conversation usually centers on the model.

Teams debate architectures, benchmark scores, and whether a larger foundation model will improve accuracy. But in enterprise systems, the model is only the last step in a much longer pipeline.

AI decisions depend on upstream data flows like transactional records, operational logs, third-party feeds, and structured event streams. Those inputs are then transformed into features the model treats as ground truth.

If the data layer contains distortions such as stale records, schema drift, biased historical data, or corrupted signals, the model does not detect the problem. It simply learns those patterns and scales them across predictions.
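Many of the distortions listed above can be caught before they reach a model. The following is a minimal, hypothetical per-record check for three of them: schema drift (unexpected or wrongly typed fields), missing values, and stale records. The field names, the `EXPECTED_SCHEMA` mapping, and the seven-day `MAX_AGE` threshold are illustrative assumptions. They are not taken from any specific pipeline.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Hypothetical expected schema for one upstream record: field name -> Python type.
EXPECTED_SCHEMA = {"record_id": str, "price": float, "updated_at": datetime}

# Assumed freshness limit; the right value depends on how fast the source changes.
MAX_AGE = timedelta(days=7)


@dataclass
class ValidationReport:
    missing_fields: list = field(default_factory=list)
    unexpected_fields: list = field(default_factory=list)
    type_errors: list = field(default_factory=list)
    stale: bool = False

    @property
    def ok(self) -> bool:
        """True only when no check failed."""
        return not (self.missing_fields or self.unexpected_fields
                    or self.type_errors or self.stale)


def validate_record(record: dict, now: datetime) -> ValidationReport:
    """Check one record for missing values, schema drift, and staleness."""
    report = ValidationReport()
    for name, expected_type in EXPECTED_SCHEMA.items():
        value = record.get(name)
        if value is None:
            report.missing_fields.append(name)
        elif not isinstance(value, expected_type):
            report.type_errors.append(name)
    # Fields the schema does not know about often signal upstream schema drift.
    report.unexpected_fields = sorted(set(record) - set(EXPECTED_SCHEMA))
    updated_at = record.get("updated_at")
    if isinstance(updated_at, datetime) and now - updated_at > MAX_AGE:
        report.stale = True
    return report
```

A check like this would typically sit at ingestion time. That way, failing records can be quarantined before they are turned into features.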

That is where most real AI failures originate.

The Zillow Pricing Model That Learned the Wrong Market

If you train a pricing model on distorted data, the model will not question it; it will simply learn the distortion.

That is exactly what happened to Zillow with Zillow Offers, its algorithm-driven home-buying business. The model relied on historical MLS data, regional price trends, and transaction velocity to predict resale values for homes the company purchased.

But during the pandemic housing surge, the market shifted faster than the model's training window could capture. Zillow's feature pipelines kept feeding lagging price signals into the valuation model, which assumed liquidity and demand would remain stable.

As a result, the algorithm systematically overestimated resale prices in several markets.

Because the system was tied directly to acquisition decisions, the error propagated through thousands of purchases. Zillow ended up holding homes priced above market reality and reported losses exceeding $500 million.

So the model did not fail; it simply trusted data that no longer represented the market.
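Zillow's internal pipeline is not public, so what follows is only a generic sketch of one common way to notice that recent data has moved away from a model's training window. It uses the Population Stability Index (PSI), which compares how training values and recent values fall into the same bins. The function names are hypothetical, and the 0.25 threshold is a widely used rule of thumb rather than a universal constant.

```python
import math
from bisect import bisect_right


def quantile_edges(values, n_bins):
    """Interior bin edges at evenly spaced quantiles of the training data."""
    ordered = sorted(values)
    return [ordered[len(ordered) * k // n_bins] for k in range(1, n_bins)]


def bin_fractions(values, edges):
    """Share of `values` falling into each bin defined by the sorted `edges`.
    Assumes `values` is non-empty."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(expected, actual, n_bins=10, eps=1e-6):
    """Population Stability Index: sum over bins of (a - e) * ln(a / e).
    `eps` keeps empty bins from producing log(0)."""
    edges = quantile_edges(expected, n_bins)
    e = bin_fractions(expected, edges)
    a = bin_fractions(actual, edges)
    return sum((ai - ei) * math.log((ai + eps) / (ei + eps)) for ei, ai in zip(e, a))


def has_drifted(training_values, recent_values, threshold=0.25):
    """Flag a feature whose recent distribution has moved away from training."""
    return psi(training_values, recent_values) > threshold
```

A pipeline feeding lagging price signals could run a check like `has_drifted` on recent sale prices. A positive result would be a cue to pause automated decisions or retrain, rather than keep trusting the old window.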

Knight Capital’s Stale Deployment That Broke a Trading System

Bad data does not always arrive through datasets; sometimes it enters through configuration.

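In 2012, Knight Capital deployed updated trading software to its production servers, but the new code never reached one of them. That server still carried legacy routing logic known internally as “Power Peg.”

The new release reused a configuration flag that had once activated Power Peg. On the updated servers, the flag switched on the new feature. On the stale server, it switched on the old Power Peg logic as if it were a live trading instruction.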

So when the market opened, that server began generating orders based on incorrect state flowing through the trading engine. Within 45 minutes, the system executed millions of unintended trades across roughly 150 stocks.

The firm accumulated roughly $440 million in losses before engineers shut the system down.

But the algorithm was functioning exactly as coded. The problem was that one piece of stale operational data changed the meaning of the entire system.
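Knight Capital's internal tooling is not described here. The sketch below is a hypothetical pre-flight check that illustrates the general safeguard: before a feature flag is switched on, confirm that every server actually runs the build that understands it. `ServerState`, `enable_flag`, and the version strings are illustrative names, not a real deployment API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerState:
    """What each production server reports about itself (hypothetical)."""
    host: str
    build_version: str


class DeploymentMismatch(Exception):
    """Raised when servers disagree about which build they are running."""


def stale_hosts(servers, expected_version):
    """Return hosts whose build differs from the version the flag was written for."""
    return [s.host for s in servers if s.build_version != expected_version]


def enable_flag(flag_name, servers, expected_version, flags):
    """Return a copy of `flags` with `flag_name` switched on, but only if every
    server runs `expected_version`; otherwise refuse instead of guessing."""
    stale = stale_hosts(servers, expected_version)
    if stale:
        raise DeploymentMismatch(
            f"refusing to enable {flag_name!r}; stale build on: {', '.join(stale)}"
        )
    updated = dict(flags)
    updated[flag_name] = True
    return updated
```

Refusing to proceed is the point of this design. A flag whose meaning depends on the code version should not be enabled while any host could interpret it differently.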

When AI Misreads Reality

If you deploy a computer vision system on imperfect visual data, the model will not pause to question what it sees. It will classify the pattern and move on.

That is the challenge police departments encountered with automated license plate recognition systems built by Flock Safety.

The platform uses computer vision models trained on large datasets of plate images to detect and match vehicles against law-enforcement databases in real time.

In production environments, however, plates are rarely clean inputs. Dirt, glare, motion blur, and inconsistent fonts introduce ambiguity at the pixel level.

In one incident involving Brandon Upchurch, the system misread the letter “O” as the number “0,” triggering a false match in the database.

Officers treated the alert as high-confidence intelligence and conducted a high-risk stop before realizing the plate had been misclassified.
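Flock Safety's matching logic is not public. As a hypothetical sketch of one mitigation, a system could treat any hotlist hit that involves look-alike characters (such as “O” and “0”) as requiring human verification instead of issuing a high-confidence alert. The `CONFUSABLE` table and the function names are illustrative assumptions, and the table is far from complete.

```python
from itertools import product

# Hypothetical, deliberately incomplete table of characters that plate readers
# can confuse. Each key maps to every character it might really be.
CONFUSABLE = {
    "O": "O0", "0": "0O",
    "I": "I1", "1": "1I",
    "B": "B8", "8": "8B",
    "S": "S5", "5": "5S",
}


def plate_variants(plate):
    """All strings the plate could be if any look-alike character was misread."""
    options = [CONFUSABLE.get(ch, ch) for ch in plate.upper()]
    return {"".join(chars) for chars in product(*options)}


def classify_read(read_plate, hotlist):
    """Return 'no_match', 'match', or 'verify' for one plate read.

    `hotlist` is assumed to be a set of upper-case plate strings. A hit is only
    reported as 'match' when the read contains no look-alike characters; any
    hit that could depend on a misread character is routed to 'verify'.
    """
    read = read_plate.upper()
    hits = plate_variants(read) & hotlist
    if not hits:
        return "no_match"
    ambiguous = any(ch in CONFUSABLE for ch in read)
    if read in hits and not ambiguous:
        return "match"
    return "verify"
```

This approach downgrades the alert rather than dropping the hit. A "verify" result still reaches officers, but as a lead to confirm by eye instead of as high-confidence intelligence.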

What This Means for You

If you decide to build an AI system on bad data, the problem will not show up immediately.

Your model will still train. The accuracy metrics may even look decent, and the demo will still work.

For the first few weeks, everything will seem normal. Then small cracks begin to show.

The recommendations start drifting in strange directions, and the predictions feel slightly off. Eventually, your team spends hours adjusting prompts, retraining models, and tweaking parameters, convinced the algorithm needs improvement.

But the algorithm is not what is quietly failing; your data is.

When the dataset contains noise, bias, missing fields, or outdated information, the model learns those flaws as if they were facts.

Then scale starts to matter.

AI systems do not make one decision. They make thousands, sometimes millions, and the same flawed pattern repeats across your product, your analytics, and your automated workflows.

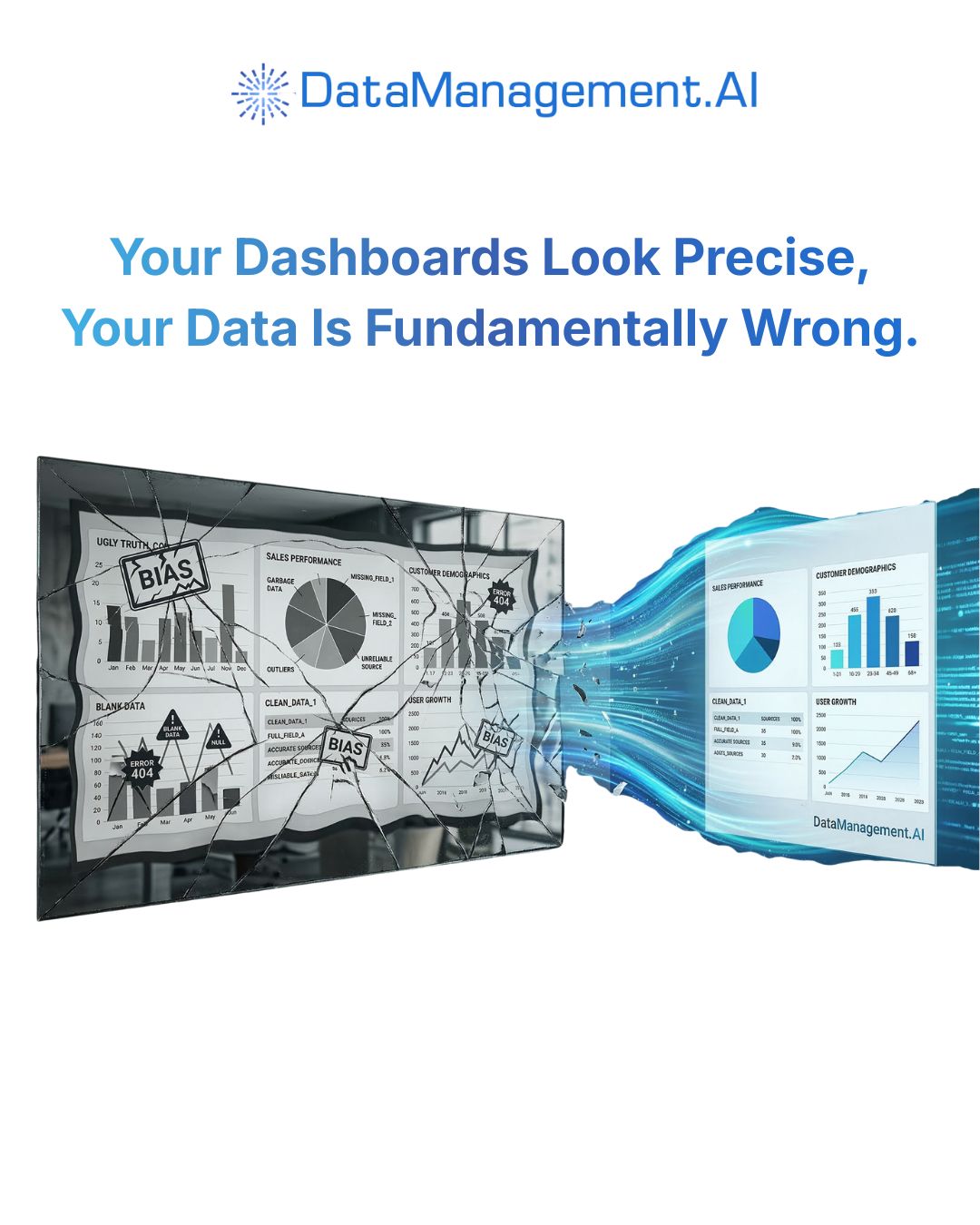

Soon your dashboards reflect a distorted version of reality. Teams start making decisions based on outputs that look precise but are fundamentally wrong.

And by the time someone traces the issue back to the training data, the consequences are already embedded in reports, strategies, and product choices.

That is the real risk of building AI on bad data.

Join Us in Advancing Data Management!

We invite you to experience the power of Chain-of-Data firsthand.

Ready to take the leap?

Don't let outdated approaches hold you back.

Embrace the future of data management with DataManagement.AI, without building data pipelines.

Warm regards,

Shen and Team